Unity Technologies reveals 2017 Unity product roadmap

Unity Technologies has unveiled its roadmap for the Unity game engine for 2017, showcasing features that will appear in the upcoming Unity 5.6 and beyond.

Highlights include a new VFX Image Sequencer tool, a new Timeline editor for cinematics, new post effects, a rewritten, 4K-capable video player, and EditorVR, a new in-VR editing environment for VR content.

In addition, OctaneRender, Otoy’s GPU-based renderer, will be integrated into every edition of Unity.

The announcements were made during the keynote of the company’s Unite Los Angeles 2016 conference.

OctaneRender running natively within Unity in a preview build of Unity 5.6.

In 2017: OctaneRender and ORBX to be integrated into every version of Unity

The biggest surprise from the two-hour presentation was undoubtedly the news that OctaneRender, Otoy’s physically correct GPU-based renderer – previously the preserve of visualization and VFX artists – will be integrated into every version of Unity, including the free Personal edition.

In his demo, which starts at around 01:18:00 in the video at the top of the story, Otoy CEO Jules Urbach showed off assets from Keloid, Big Lazy Robot’s cult VFX short, being manipulated in Unity in real time.

Changes are displayed in the built-in OctaneRender viewport at near-photorealistic quality.

The integration will also make it possible for Unity to render new types of previously offline-only content: notably volumetric effects in OpenVDB format.

“We think the future of cinematic rendering is going to be fundamentally transformed by this collaboration,” Urbach told the audience.

ORBX, Otoy’s next-gen video codec, will also be integrated into Unity, with the option to publish ORBX content to platforms that support it, including the HTC Vive, the Oculus Social app, Facebook’s 360 Videos and the Samsung Internet browser.

Unity Technologies hasn’t announced a release date for the OctaneRender integration, beyond simply “next year”, but the demo showed it running inside an early build of Unity 5.6.

In alpha: new Timeline editor for cinematic content

If the OctaneRender integration looks likely to push Unity further towards the kind of rendering quality previously associated with its main rival, Unreal Engine, its new Timeline feature is straight from the UE4 playbook.

A new editing system for cinematic content, much like UE4’s own Sequencer, Timeline is a C#-based procedural camera and timeline system that plugs in on top of the existing Unity API.

According to Unity head of cinematics Adam Myhill, the new technology “works like you’re a director directing a camera operator”, making it possible to create camera moves and transitions without keyframing.

Instead, users simply drag clips on the timeline to repeat or retime them, to blend between procedural cameras (effectively creating new camera moves), or to retime transitions.
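Unity hasn’t detailed how Timeline computes a blend, but overlapping two camera clips is commonly treated as a crossfade: across the overlap, a weight ramps from 0 to 1 and the two cameras’ properties are interpolated. The Python sketch below illustrates that idea under those assumptions. `Clip`, `blend_weight` and `blend_position` are hypothetical names, the linear ramp is an assumption, and none of this is Unity’s actual (C#) Timeline API.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    start: float  # seconds on the timeline
    end: float
    # Camera position this clip's procedural rig produces (held static for simplicity).
    position: tuple[float, float, float]


def blend_weight(t: float, outgoing: Clip, incoming: Clip) -> float:
    """Weight of the incoming clip at time t: 0 before the overlap, 1 after it."""
    overlap_start, overlap_end = incoming.start, outgoing.end
    if overlap_end <= overlap_start:
        # Clips don't overlap: treat it as a hard cut at the incoming clip's start.
        return 1.0 if t >= incoming.start else 0.0
    w = (t - overlap_start) / (overlap_end - overlap_start)
    return min(1.0, max(0.0, w))  # linear ramp, clamped to [0, 1]


def blend_position(t: float, outgoing: Clip, incoming: Clip) -> tuple[float, ...]:
    """Linearly interpolate the two cameras' positions by the blend weight."""
    w = blend_weight(t, outgoing, incoming)
    return tuple((1 - w) * a + w * b for a, b in zip(outgoing.position, incoming.position))


wide = Clip(0.0, 6.0, (0.0, 2.0, -10.0))
close = Clip(4.0, 10.0, (1.0, 1.6, -2.0))
print(blend_position(5.0, wide, close))  # halfway through the 4 s to 6 s overlap
```

Dragging the incoming clip earlier on the timeline lengthens the overlap, which in this model simply stretches the ramp into a slower transition without touching any keyframes.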

The system, which can be seen in action from around 01:12:00 in the video at the top of the story, also supports other procedural features, like random motion ‘noise’ to mimic handheld camera movements.
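Procedural handheld ‘noise’ is usually a smooth random signal added to the camera’s rotation each frame; independent per-frame jitter would read as vibration rather than a person holding the camera. The minimal Python sketch below assumes 1D value noise with smoothstep interpolation. `HandheldShake`, its default amplitude and frequency, and the per-axis offset are illustrative assumptions rather than Unity’s implementation.

```python
import math
import random


class HandheldShake:
    """Hypothetical smooth 1D value noise used to offset a camera's rotation (degrees)."""

    def __init__(self, amplitude_deg: float = 0.5, frequency_hz: float = 1.5, seed: int = 0):
        self.amplitude = amplitude_deg
        self.frequency = frequency_hz
        rng = random.Random(seed)
        # One random value in [-1, 1] per integer lattice point; the pattern repeats after 1024.
        self.lattice = [rng.uniform(-1.0, 1.0) for _ in range(1024)]

    def _noise(self, x: float) -> float:
        i = math.floor(x)
        f = x - i
        a = self.lattice[i % len(self.lattice)]
        b = self.lattice[(i + 1) % len(self.lattice)]
        s = f * f * (3 - 2 * f)  # smoothstep: eases in and out of each lattice value
        return a + (b - a) * s   # stays within [-1, 1]

    def offset(self, t: float, axis: int = 0) -> float:
        """Rotation offset at time t (seconds); each axis samples a different lattice region."""
        return self.amplitude * self._noise(t * self.frequency + axis * 97.0)


shake = HandheldShake(amplitude_deg=0.5, seed=42)
pitch_yaw_roll = [shake.offset(1.25, axis) for axis in range(3)]
```

Feeding `offset(t, axis=0)`, `offset(t, axis=1)` and `offset(t, axis=2)` into pitch, yaw and roll gives three different, slowly wandering offsets; raising `frequency_hz` makes the virtual operator look more nervous.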

Unity hasn’t announced a release date for Timeline, but it has confirmed that the technology will be a free package in the Unity Asset Store rather than being integrated into the core software.

In public beta: new Image Sequencer tool for editing image sequences

Other upcoming Unity features geared towards cinematics and photorealistic content include the new Image Sequencer and a range of new or improved post-processing effects.

The former is a new tool for converting image sequences generated in third-party software – the keynote showed an explosion sim generated in Houdini – into single Flipbook texture sheets within Unity.

You can see it in action in the video above, or in more detail from around 00:45:00 in the keynote video.

Users can retime image sequences within Unity to fit an arbitrary maximum number of frames, resize frames to power-of-two dimensions, or crop their borders to use texture space more efficiently.
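As a rough illustration of the bookkeeping involved (not Image Sequencer’s actual code), the Python sketch below picks evenly spaced source frames to fit a maximum frame count, rounds each frame up to power-of-two cell dimensions, and lays the cells out in a power-of-two grid so the whole sheet also has power-of-two dimensions. The function names, the even-spacing retime and the round-up policy are all assumptions.

```python
import math


def next_power_of_two(n: int) -> int:
    """Smallest power of two >= n (for n >= 1)."""
    return 1 << (n - 1).bit_length()


def retime(frame_count: int, max_frames: int) -> list[int]:
    """Pick at most max_frames source frame indices, evenly spaced across the sequence."""
    if frame_count <= max_frames:
        return list(range(frame_count))
    step = frame_count / max_frames
    return [int(k * step) for k in range(max_frames)]


def flipbook_layout(frame_count: int, frame_w: int, frame_h: int, max_frames: int) -> dict:
    """Hypothetical layout plan for packing a sequence into one flipbook texture sheet."""
    frames = retime(frame_count, max_frames)
    # Round each frame up to power-of-two cell dimensions.
    cell_w, cell_h = next_power_of_two(frame_w), next_power_of_two(frame_h)
    # Near-square grid, with both grid dimensions rounded up to powers of two.
    cols = next_power_of_two(math.ceil(math.sqrt(len(frames))))
    rows = next_power_of_two(math.ceil(len(frames) / cols))
    return {
        "source_frames": frames,
        "cell_size": (cell_w, cell_h),
        "grid": (cols, rows),
        "sheet_size": (cols * cell_w, rows * cell_h),
    }
```

For example, a hypothetical 120-frame, 300×280-pixel explosion render capped at 64 frames maps to an 8×8 grid of 512×512 cells on a 4096×4096 sheet; cropping empty borders first would let smaller cells suffice.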

Image Sequencer is currently in public beta. You’ll need Unity 5.5 beta 7 or higher to run it.

In public beta: new post-processing effects stack

Unity Technologies has also been working to add “the same kinds of [post-processing] tools that artists in the movie industry have” to Unity.

The new post-processing stack, an open-source collection of C# scripts and shaders, adds a new bloom effect and improved chromatic aberration and eye adaptation.

Depth of field has been completely rewritten, with the new implementation featuring sliders for real-world camera properties including Focal Distance, Aperture and Focal Length.
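These sliders correspond to the standard thin-lens model of defocus blur. As a hedged sketch (the textbook formula, not Unity’s shader implementation), the diameter of the blur circle c for a point at distance S2, seen through a lens of focal length f at f-number N focused at distance S1, is c = (f / N) * |S2 - S1| / S2 * f / (S1 - f). The code treats the Aperture slider as an f-number, which is an assumption.

```python
def circle_of_confusion(focal_length_m: float, f_number: float,
                        focus_distance_m: float, subject_distance_m: float) -> float:
    """Thin-lens blur-circle diameter on the sensor (metres) for a point at subject_distance_m."""
    if focus_distance_m <= focal_length_m:
        raise ValueError("focus distance must exceed the focal length")
    aperture_diameter = focal_length_m / f_number  # entrance pupil diameter A = f / N
    return (aperture_diameter
            * abs(subject_distance_m - focus_distance_m) / subject_distance_m
            * focal_length_m / (focus_distance_m - focal_length_m))


# A 50mm lens at f/2, focused at 2m, looking at a point 4m away.
blur = circle_of_confusion(0.05, 2.0, 2.0, 4.0)
print(f"{blur * 1000:.2f} mm")
```

Opening the aperture (a smaller f-number) or lengthening the focal length enlarges c, which is why those sliders behave like their real-camera counterparts; a point exactly at the focal distance gets no blur at all.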

The demo, which can be seen at around 00:53:00 in the keynote video, also shows a real-time colour correction workflow using standard histogram and vectorscope controls.

There is also a fast new temporal anti-aliasing system: according to Unity Technologies, on the PS4, it computes in under 1ms per frame at 1080p resolution.
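To put the PS4 figure in context: a game targeting 60fps has a frame budget of 1000/60 ≈ 16.7ms, and one targeting 30fps has 1000/30 ≈ 33.3ms, so a pass that takes under 1ms uses at most roughly

$$\frac{1\,\text{ms}}{16.7\,\text{ms}} \approx 6\% \quad (60\,\text{fps}), \qquad \frac{1\,\text{ms}}{33.3\,\text{ms}} \approx 3\% \quad (30\,\text{fps})$$

of the frame. These are upper bounds worked out from Unity Technologies’ “under 1ms” claim, not measured figures.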

The post-processing stack is currently “near the end of beta”, and is available from Unity’s GitHub repository.

In alpha: new Progressive Lightmapper for faster, interactive light baking

The company also showed off its new Progressive Lightmapper, originally announced at GDC earlier this year, and intended as a faster, more iterative solution for baking lighting.

“If there’s one area where we’re very aware that we have some work to do to earn artists’ love, it’s lightmapping,” admitted Unity Technologies technical director Lucas Meijer.

An unbiased Monte Carlo path tracer capable of baking out lightmaps with global illumination (GI) inside the Unity Editor, the new system provides real-time feedback on a bake in the form of a progressive viewport preview.
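The progressive preview rests on a basic property of Monte Carlo estimation: each texel’s value is a running average of random light samples, so a usable (noisy) result exists after the first pass and sharpens as samples accumulate, without the estimate being biased. The toy Python sketch below shows only that accumulation step for a single texel under a uniform, unoccluded sky, whose exact irradiance is π times the sky radiance. A real lightmapper traces full paths through the scene geometry; `ProgressiveTexel` and `sample_irradiance` are hypothetical names.

```python
import math
import random


def sample_irradiance(rng: random.Random, sky_radiance: float) -> float:
    """One unbiased irradiance sample for a texel under a uniform, unoccluded sky.

    Directions are drawn uniformly over the hemisphere (pdf = 1 / (2*pi)), which makes
    cos(theta) uniform on [0, 1]; the estimator is radiance * cos(theta) / pdf.
    """
    cos_theta = rng.random()
    return sky_radiance * cos_theta * 2.0 * math.pi


class ProgressiveTexel:
    """Keeps a running mean so the current estimate can be displayed after any pass."""

    def __init__(self) -> None:
        self.samples = 0
        self.mean = 0.0

    def add_samples(self, rng: random.Random, count: int, sky_radiance: float = 1.0) -> float:
        for _ in range(count):
            self.samples += 1
            x = sample_irradiance(rng, sky_radiance)
            self.mean += (x - self.mean) / self.samples  # incremental mean, no history stored
        return self.mean


rng = random.Random(1)
texel = ProgressiveTexel()
for pass_index in range(5):
    estimate = texel.add_samples(rng, 2000)
    print(pass_index, texel.samples, round(estimate, 3))  # drifts towards pi for radiance 1
```

Because the mean is updated incrementally, the preview can be redrawn after every pass, and an artist can stop the bake as soon as the remaining noise looks acceptable.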

The Progressive Lightmapper is currently in closed alpha, with no public release date confirmed. You can read about it in more detail in this blog post.

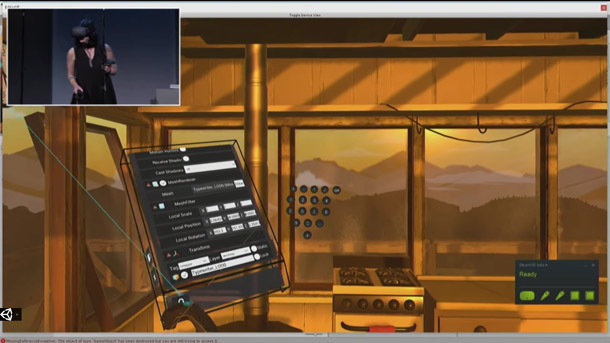

The interface for EditorVR, Unity’s new VR editing toolset, showing the float-out numeric keyboard.

In alpha, preview due in December 2016: new EditorVR toolset

Outside of graphics, another major new feature coming up in Unity is the EditorVR toolset – which, like Unreal Engine’s VR Editor mode, enables artists to edit VR content within a VR environment.

The demo, which you can see from around 01:47:00 in the keynote video, shows a scene from Campo Santo’s mystery/exploration game Firewatch being edited using an HTC Vive headset and controllers.

As well as moving assets around within the scene, users can bring up an in-VR Unity menu – a rotating cuboidal display with standard 2D Unity menus mapped to its faces – to access other controls.

That includes the Inspector panel, with the option to bring up separate floating keyboards – either a full keyboard, or just numeric input – to edit scene properties from within VR.

There is also the Chessboard, an intriguing in-VR ‘minimap’ of the scene, which makes it possible to navigate from point to point quickly.

Users can also open up multiple Chessboard displays and drag objects between them, making it easier to reposition large environment assets.

A separate Tools pane provides access to third-party add-ons: the demo showed a subdivision surface modelling tool and an implementation of the real-time animation prototyping system Tvori.

While many artists remain to be convinced of the value of working in-VR in this way, with motion sickness and eye strain cited as common issues, Unity is bullish about the new technology.

“We think this will be genuinely useful for you [even] if you’re not making something in VR; if you’re just making something in 3D,” said Timoni West, principal designer at Unity Labs.

EditorVR is also open-source, and will be available as a compiled package via the Unity Asset Store. Unity Technologies expects to release a preview in December.

Unity 5.6 will feature a complete rewrite of the video player, geared towards 4K and 360-degree video.

In Unity 5.6, preview due soon: new 4K-capable video player

The company has also “completely rewritten” its video player, in part to support 360-degree VR videos.

The new player is hardware-accelerated and capable of smooth playback of 4K videos. It supports H.264, VP8 and other standard video codecs, and will be available in Unity 5.6, with a preview “soon”.

In Unity 5.6, preview available now: native Daydream support

Other new virtual reality features include native integration for Daydream, Google’s new mobile VR platform.

The Daydream View headset and controller, which are due to ship on 10 November, provide the kind of hand-tracking functionality previously only available on PC-based VR platforms to users of Android phones.

According to Google product manager Nathan Martz, Daydream users will be able to access the Google Play store from within VR and to make in-app purchases within VR apps right from launch.

Daydream will be supported as a deployment platform within Unity 5.6. A public preview is available now.

In Unity 5.6, preview available now: Facebook build support

Unity Technologies also announced a number of other new build platforms due in Unity 5.6, including Gameroom, Facebook’s new Steam-like Windows desktop gaming platform.

A build of Unity 5.5 with a beta version of Gameroom support is available via Facebook’s dev site.

Other new Unity build targets include Vuforia, PTC’s AR platform; the new Nintendo Switch portable console; and Chinese smartphone manufacturer Xiaomi’s Android app store.

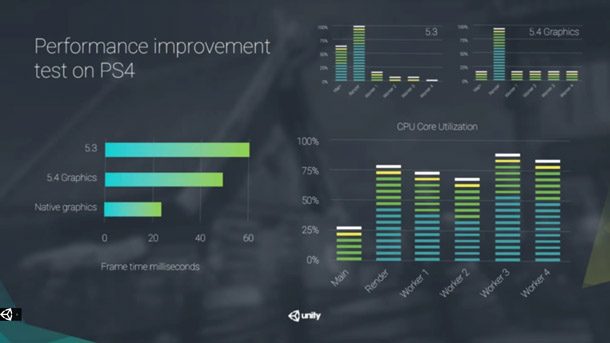

Improved multithreading will even out the allocation of render calls in future builds of Unity.

In Unity 5.6: under-the-hood improvements

As well as new functionality, Unity 5.6 will feature a number of under-the-hood improvements, including improved multithreading.

The functionality, which builds on work done in Unity 5.4 to move render calls out of the main thread, will enable rendering jobs to be more evenly distributed to worker threads.
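Unity Technologies hasn’t published how its scheduler splits rendering work, so the following is only a generic illustration of what “more evenly distributed” means: given an estimated cost per render job, a greedy longest-job-first assignment keeps the busiest worker’s total close to the average. The function name, the cost model and the choice of algorithm are assumptions, not Unity’s implementation.

```python
import heapq


def distribute_jobs(job_costs: list[float], worker_count: int) -> list[list[int]]:
    """Assign job indices to workers, trying to keep the most-loaded worker's total low.

    Greedy 'longest processing time first': visit jobs in descending cost order and
    give each to the currently least-loaded worker, tracked with a min-heap.
    """
    heap = [(0.0, w) for w in range(worker_count)]  # (total assigned cost, worker id)
    assignments: list[list[int]] = [[] for _ in range(worker_count)]
    for job, cost in sorted(enumerate(job_costs), key=lambda jc: jc[1], reverse=True):
        load, worker = heapq.heappop(heap)
        assignments[worker].append(job)
        heapq.heappush(heap, (load + cost, worker))
    return assignments


costs = [5, 4, 3, 3, 2, 2, 1]  # hypothetical per-job cost estimates
plan = distribute_jobs(costs, 3)
print(plan, [sum(costs[j] for j in jobs) for jobs in plan])
```

With these costs across three workers, the greedy pass gives per-worker totals of 7, 7 and 6, whereas dealing the jobs out round-robin by index would give 9, 6 and 5, leaving two workers idle while the third finishes.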

Unity Technologies claims that this results in a speed boost of around 2.5 times over Unity 5.3.

The engine’s Transform component, used to move every object in the scene around, has also been rewritten, again yielding a 2.5-times speed boost in Unity 5.6 over Unity 5.3.

There is also a new C# job system which provides built-in debugging when writing multithreaded code.

In the keynote – you can see it at around 00:30:00 in the video at the top of this story – Unity Technologies CTO Joachim Ante showed a flocking simulation of 20,000 fish running at over 30fps within Unity.

Ante commented that the work paves the way for much more complex games like MMORPGs to be built within Unity, citing 15-man start-up Visionary Realms’ Pantheon: Rise of the Fallen as an example.

“It feels like we’re at an inflection point where the engine is about to scale up to the next level,” he said.

No date specified: new asset pipeline, including background asset loading

Ante also showed off Unity’s work-in-progress asset pipeline, intended to make it possible for artists to continue working in the editor while the assets for a project are loading in.

The new pipeline, which is “still a while out in the future”, will mean that the editor becomes accessible again once the live assets in a project have loaded in.

The other assets in the project folder will then be loaded in the background.
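Unity Technologies hasn’t published the design, but the behaviour described can be sketched as a two-phase load, assuming the editor can tell which assets the open scene references. In the Python sketch below, `open_project`, its parameters and the “live asset” test are hypothetical; the point is only the ordering: block on what the scene needs, then stream the rest on a background thread.

```python
import threading


def open_project(assets, scene_assets, load_asset):
    """Hypothetical loader: block on the open scene's assets, stream the rest.

    assets       -- every asset name in the project folder, in load order
    scene_assets -- names the currently open scene references (the "live" assets)
    load_asset   -- callable that loads one asset by name and returns its data
    Returns (loaded, lock, thread); read `loaded` under `lock` until the thread finishes.
    """
    loaded = {}
    lock = threading.Lock()

    # Phase 1: load live assets synchronously; the editor would become usable after this.
    for name in assets:
        if name in scene_assets:
            loaded[name] = load_asset(name)

    # Phase 2: hand everything else to a background thread.
    remaining = [name for name in assets if name not in scene_assets]

    def load_remaining():
        for name in remaining:
            data = load_asset(name)  # slow I/O happens outside the lock
            with lock:
                loaded[name] = data

    thread = threading.Thread(target=load_remaining, daemon=True)
    thread.start()
    return loaded, lock, thread
```

In this model the time before the editor unblocks depends only on the size of the open scene, not on the size of the whole project folder.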

Unity Technologies is also working on a ‘hot reload’ feature intended to allow VR and mobile developers to make changes to a project and see them reflected in the build in real time.

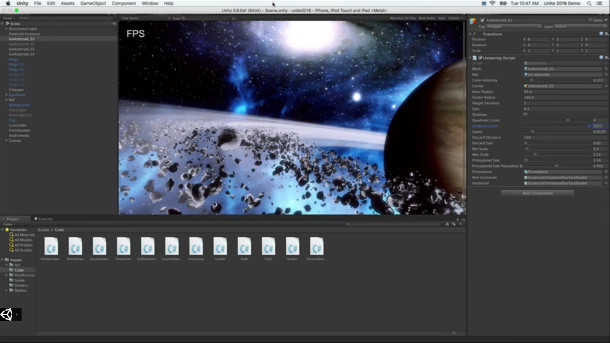

Unity 5.5 introduced GPU instancing in Metal. This demo displays 12,000 asteroids at 30fps.

In Unity 5.6: Vulkan support, compute support for Metal

The company has also announced new or extended support for two key mobile graphics APIs.

Unity 5.6 will introduce native support for Vulkan, the open standard intended as a ground-up redesign of OpenGL. An early preview build was released in September.

The update will also “deepen” Unity’s support for Apple’s rival Metal API, used on iOS devices.

The current Unity 5.5 beta introduced support for GPU instancing, shader cross-compiling and native shaders within Metal, to which 5.6 will add new compute capabilities.

Support for running the Unity Editor itself on Metal, plus tessellation support, will follow some time in 2017.

Outside Unity, in open beta: the Unity Connect job marketplace

Finally, one of the new services unveiled in the keynote exists outside Unity itself.

The new Unity Connect online ‘talent marketplace’ is a searchable database of Unity developers, listing their key skills and providing samples of their previous work.

Developers looking to recruit for a project can search the database, or post job listings. The service is currently in open beta, and creating a portfolio is free.

Read Unity Technologies’ own summary of its Unite Los Angeles 2016 keynote announcements