Sneak peek: Adobe’s Project Sweet Talk and Go Figure

As well as being a platform to announce its new product releases, the Adobe MAX conference gives Adobe a chance to showcase some of its more blue-sky research.

This year’s Sneaks session, the recordings of which are now available online, showed off 12 new technologies that may – or may not – make it into Adobe’s commercial tools in future.

Some are geared more to consumer photography or audio than to professional graphics work, but three in particular caught our attention this year, all of them trained using Adobe’s Sensei AI technology.

Project Sweet Talk: automated facial animation from any audio file and source image

Automatic generation of facial animation from recorded speech isn’t a new concept: Adobe already uses it in Character Animator, its puppet-based 2D animation software.

However, that system requires a certain amount of set-up, including the creation of a set of mouth shapes for a character, which the software displays when it detects the corresponding phoneme in the audio file.

Project Sweet Talk aims to do the same thing, but without the set-up.

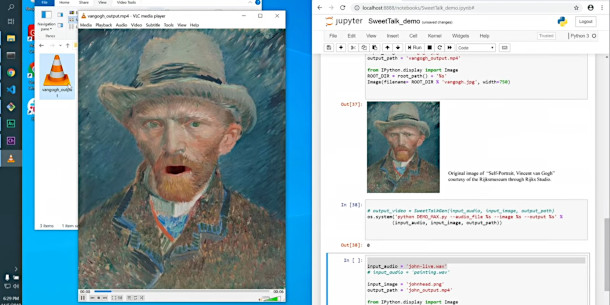

The system works with any unprepared image, identifying and deforming facial features to generate mouth shapes and, to a lesser extent, eye and brow movements matching the audio file.

Adobe’s demo shows the system in action with a range of images: a simple sketch of a cat, a Van Gogh self-portrait and – after a few technical hitches – a caricature of Sneaks’ co-host, the comedian John Mulaney.

Project Go Figure: transfer full-body animation from live footage to a 2D character

A second demo, Project Go Figure, takes the workflow used in Character Animator, in which a video feed of a live performer drives a 2D animated character, and extends it from facial animation to full-body motion.

The system processes video footage of an actor, tracking 18 key points on the head, torso and limbs, and generating a roto mask around the entire body.

The motion is then applied to a character within After Effects.

The workflow will already be familiar to users of markerless motion-capture systems, such as those developed by iPi Soft, although it’s interesting to see it used on a 2D rather than a 3D character.

The system also works with standard camera phone footage, rather than requiring multiple camera views, or a device with an active depth sensor.

Project Image Tango: generate new stock photos matching a source outline and texture reference

Another AI-based technology, Project Image Tango, mixes two assets to create a new image that has the “detailed shape of one and the texture of the other”.

Adobe showed the system in use with a standard stock photo as the texture image.

Feeding in a second image representing a similar object in a different pose, or seen from a different angle, generated an output image with the overall look of the texture image, but the profile of the shape image.

The system even works with drawn images: another demo showed a crude outline sketch being used to generate a ‘photographic’ image of a bird in a matching pose.

As well as generating custom reference images for CG work, the system has potential for generating design variants: one demo showed a photo of a dress being customised with a separate set of fabric textures.

Other new technologies for relighting photos and animating text

Other tech demos shown during the Sneaks session included Project Light Right, a system for relighting photographs based on 3D information extracted from multiple views of a scene, and Project Fantastic Fonts, which automatically animates and distorts text in response to the motion of a mobile device.

You can find more details via the link below, which includes recordings of all 12 projects.

Read more about Adobe MAX 2019’s Sneaks session on Adobe’s blog