Q&A: Autodesk’s Project Skyline team

Unveiled at GDC last week, Autodesk’s Project Skyline aims to provide an industry-standard platform that lets artists without programming expertise view the impact of animation changes directly within the game engine.

In a world in which the number of games in development increases annually, but staffing levels and turnaround times do not, the only sensible solution is to recycle.

But it’s not easy being green. Assets created for one game may behave unpredictably when deployed in another, particularly when old animation data meets a new physics engine. As Autodesk product manager Eric Plante puts it: “Animation combined with code is where everything tends to go pear-shaped.”

It is this problem – and more generally, the need for artists to interact with content directly in the game engine – that Autodesk’s Project Skyline is designed to address. Announced publicly at GDC last week, and still very much a technology in development rather than a commercial solution, Skyline aims to provide an environment in which artists can build and edit interactions for their characters without having to get programmers involved.

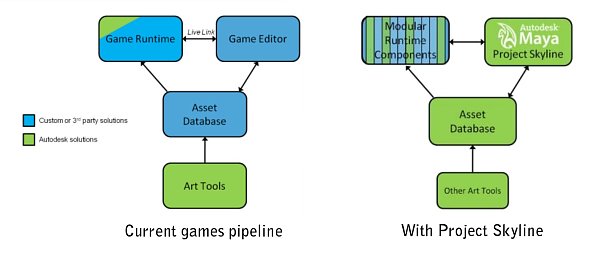

The ‘martini glass’ structure of modern pipelines. Note the ‘golden triangle’: the top three stages in the diagram.

Autodesk describes the structure of the modern games pipeline as the ‘martini glass’. Rather than a traditional linear workflow, in which assets are exported from the art tools to the level editor, and from there to the game engine – a slow, one-way and largely trial-and-error process – studios are increasingly attempting to create a live link between the level editor and the game engine. Draw the components out on paper, and the pipeline resembles an inverted triangle with a vertical stem.

This arrangement provides instant feedback on the interaction between authored and runtime data, but the tools necessary to sustain it are proprietary and therefore usually costly. Project Skyline is intended to provide a less expensive out-of-the-box alternative.

Its UI will be familiar to Maya users; its node-based programming philosophy to users of Softimage’s ICE system. And if it just happens to enable Autodesk to “own the golden triangle” – the company’s own term for providing the industry-standard tools in the top part of that martini glass – so much the better.

That tradeoff – between the benefits of an industry-standard toolset and the risk of a one-size-fits-all solution controlled by a single software developer – was just one of the issues we discussed when we met up with Autodesk last month.

We spoke to Eric Plante and Autodesk’s senior product manager – and Ubisoft and Digital Domain veteran – Mathieu Mazerolle about how Project Skyline has evolved, and where the company intends to take it next.

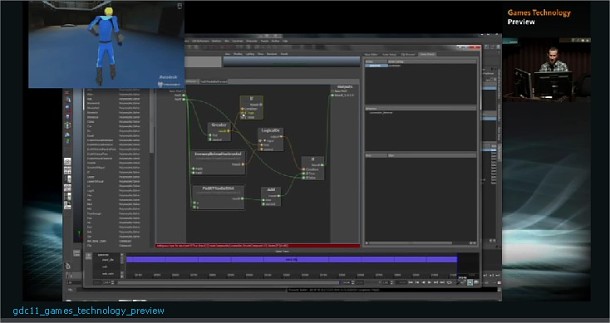

Project Skyline’s game trace view shows animations being combined as the game plays.

CG Channel: How did Project Skyline come about?

Mathieu Mazerolle: When I arrived at Autodesk a little over two years ago, there were a variety of ‘science lab’ type projects going on inside the company. There was a parallel computing technology called Amino: a node-based technology to address massively parallel computing architectures. And we had the HumanIK [middleware], which was a biomechanical model of a human. There was talk of breaking HumanIK into something more modular so it didn’t have to [be used solely for human characters], and applying Amino to represent those different solvers.

But we realised that it would have been a mistake to build a standalone tool to allow people to author Amino. The right idea was to have Amino running on game consoles and to have a tool that then discovers what’s going on. But ultimately, all of this IK stuff [like HumanIK] doesn’t mean anything unless you have the keyframe animation feeding into it, so we said: “Why don’t we take the next step, and actually get all that keyframe data in there?” That was our mental evolution for Skyline.

CGC: What were the crucial changes as the system evolved?

MM: There were two. The first one was this idea that the tools should discover what’s going on in the runtime. That’s the essence of ‘live link’: the idea that assembling stuff in the tool and then exporting it out to a game engine to run at runtime was the wrong way of approaching [the problem]. The second was that we had to involve the animator much more.

CGC: What’s the architecture of Skyline? Is it based on Maya?

MM: The user interface tool is based on a lot of the Maya UI, and a lot of the components there are really pieces [of Maya]. But the asset framework is technology that’s been kicking around in Autodesk for a while: the DNA infrastructure. We’ve evolved that very fast over the past couple of years to address the needs of Skyline.

And the third component is the Amino runtime component. Really, Skyline is an assembly of various Autodesk technologies into something cohesive […] that solves an industry-wide workflow problem.

CGC: But if the look and feel come from Maya, don’t you run the risk of alienating your 3ds Max and Softimage users?

MM: We get asked that a lot. The first customer we showed this iteration of Skyline to was actually a Max facility. But people do understand that [we’re still just exploring an idea] and we can’t do things equally well on three different platforms.

Most of our customers have to bring all of their Max data into their own pipeline anyway, which tends not to have all of the features of Skyline. Generally, what they have in-house is much more degraded in terms of user experience. So Skyline is still a step up, and doesn’t interfere with their existing content creation tools.

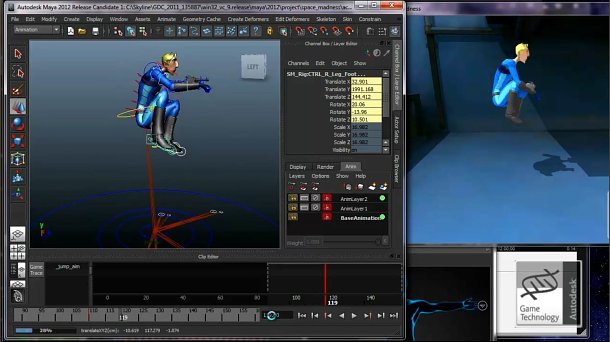

A source animation being modified as the game is running. Changes are immediately picked up by the engine.

CGC: Given that Autodesk owns so much of the content-creation market, and is now trying to own the ‘golden triangle’, won’t people feel locked into a single vendor?

MM: I think trusting one vendor can be seen as a good thing in terms of providing a lot of the common pieces. Autodesk is a very humble servant to the industry. We don’t have any designs on taking over anyone’s pipeline. The reason we want to provide more pipeline [tools] is that there are compelling market forces asking for [them] to be created. We’re just responding to something that is already a reality in the industry.

CGC: So what does Project Skyline have to offer studios facing that reality?

MM: With a traditional animation system, you have to worry about making every single little thing you want to animate [talk to the physics system]. Because Skyline is all data-driven – it’s all done through a node-based [authoring system] – the point of contact is really between the AI system and the animation system. Internally, we call this the ‘brain-body separation’.

Say I shoot you in the game. That’s an AI event, and you can implement that by just having an animation of [your character starting to tip over]. That establishes the contract between the AI and the animation system. If you later want to refine that [with] ragdoll physics, [or] have different animations based on the direction, an artist should be able to do that without having to interact with a programmer. Today that’s not possible, but Skyline enables you to do that in any pipeline.
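As a rough illustration of the ‘brain-body separation’ Mazerolle describes, the sketch below shows an AI layer that only raises a named event, and an animation layer that decides how to respond. It is a minimal sketch, not Skyline’s API: every name in it (`AnimationSystem`, `bind`, `handle`, `ai_on_shot`, the `"hit"` event and the clip names) is hypothetical and invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# All names here are hypothetical; none of this is Skyline's actual API.


@dataclass
class AnimationSystem:
    """The 'body': maps named AI events to authored responses."""
    responses: Dict[str, Callable[[dict], str]] = field(default_factory=dict)

    def bind(self, event: str, response: Callable[[dict], str]) -> None:
        # Rebinding an event replaces the response without touching AI code.
        self.responses[event] = response

    def handle(self, event: str, params: dict) -> str:
        # Returns the name of the animation chosen for this event.
        return self.responses[event](params)


def ai_on_shot(body: AnimationSystem, direction: str) -> str:
    """The 'brain': knows only the event name and its parameters."""
    return body.handle("hit", {"direction": direction})


body = AnimationSystem()

# First pass: one tip-over animation establishes the contract.
body.bind("hit", lambda params: "tip_over")
first = ai_on_shot(body, "left")


# Later refinement: pick an animation based on the shot direction.
def directional_hit(params: dict) -> str:
    by_direction = {"left": "fall_right", "right": "fall_left"}
    return by_direction.get(params["direction"], "tip_over")


body.bind("hit", directional_hit)
second = ai_on_shot(body, "left")
```

In this sketch, `ai_on_shot` is identical before and after the refinement; only the response bound to the `"hit"` event changes, which is the part an artist would own in the workflow described above.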

CGC: Which engine providers are you working with?

MM: We have relationships with all kinds of engine providers, at many different kinds of levels. That’s something we look forward to continuing. A big part of delivering good economy is not having every single customer of that engine do [the integration] themselves, each slightly differently.

CGC: Autodesk has acquired a lot of middleware companies over the years. Why hasn’t it tried to acquire an engine provider itself?

MM: It’s just not part of our strategy. We’re [still] at the stage of trying to figure out workflow. We’ve figured out what our customers want a good pipeline structure to look like and we’re figuring out how to build it. Rendering engines, audio – these are just not things we need to deal with at this stage. And honestly, there are so many opportunities to collaborate with existing engines that it’s not an issue for us.

Eric Plante: And since most of our customers [and over 80% of titles] are using their own engines, they’re not looking for a complete replacement. They have existing franchises, existing assets that are built for existing pipelines. With something like Project Skyline you can come in with a ‘renovation’ that is not too disruptive to existing workflow. We’re not asking them to throw everything they’ve built over the years on the scrapheap.

CGC: Now that you’ve announced Project Skyline publicly, what’s the next step in development?

MM: Customer feedback. People can now play with the technology in a limited way – I don’t think anyone’s going to build a game with it [yet] – but once we can have people other than us hammering on it, that’s going to be very gratifying.

CGC: What specific types of feedback are you looking for?

MM: Performance, usability, workflow, collaboration. A lot of the niggly wigglies. We have a good solid foundation, but we don’t want to rest on our laurels. These things can live or die based on the quality of the workflow, which [can be the result of quite] small decisions.

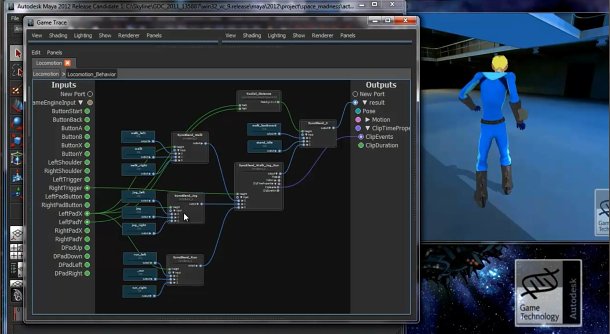

Project Skyline adopts a node-based visual programming workflow. Here a new animation is added to the blend tree while the game is running.

CGC: What aspects of the technology are you most and least confident about?

MM: The node-based workflow is pretty solid. [Softimage’s] ICE has broken the ice in terms of visual programming environments. Leapfrogging off [years of research] is going to give us a huge advantage.

Where am I worried? I’m always worried about performance. Not because I think we won’t have it – we’ve got some cool ways of doing parallel computing that will run circles around what our customers can do by hand – but the demands are so extreme we have to be willing to address them.

EP: It’s easy to demonstrate workflow. Customers can see that we ‘get it’. But the one thing you can’t demonstrate until you actually put the code in their hands is performance.

CGC: Which platforms have you tried Project Skyline on?

MM: Right now, we’re concentrating on the big two consoles. Our last demo happened on PS3 with everything controlled by Maya, and it was very cool to see the Skyline system talking to a console directly. We were running a lot of the stuff on SPUs, all the solvers. That’s pretty cool for something that’s at such an early stage.

[Windows] is extremely well supported as well, since it’s what we develop on internally. Some of our developers just work with PCs because it’s convenient, and there’s a low cost – dev kits do cost money.

CGC: And what about mobile devices?

MM: It’s going to depend on opportunity. The reality is that there are diminishing returns as you address more and more [platforms]. Given where we’re at, we’re aiming at the big targets: there’s an explosion of mobile devices out there, and it would be a massive sap on resources to address every single one. But do we eventually want to be able to do that, once things become more evolved? Absolutely.