The making of John Carter’s epic digital environments

It seems deeply unfair that John Carter is likely to go down in film history solely for the amount of money it will lose Disney. While historians will debate whether the primary cause was the shortcomings of the plot, Pixar veteran Andrew Stanton’s direction, or simply the curse that led three generations of film-makers to fail to bring Edgar Rice Burroughs’ proto-sci-fi epic to the big screen, the fact remains that the VFX look stunning.

John Carter is the equal of Avatar in scope and ambition, if not in box-office takings, and nowhere is its scale more evident than in its digital environments. Much has been made of the concept art, MPC’s recreation of the Warhoon hordes, and Double Negative’s work on the Tharks and other Martian creatures. Yet the second-biggest effects vendor, contributing 831 individual VFX shots and stereo-converting 87 minutes of footage, was the one responsible for almost all of its settings: London’s Cinesite.

“Double Negative did some amazing work, and quite rightly [are getting] a lot of attention for it,” says Cinesite’s Sue Rowe. “But I feel like standing at the back and going: ‘Yeah, but look at this glass palace. It’s beautiful.'”

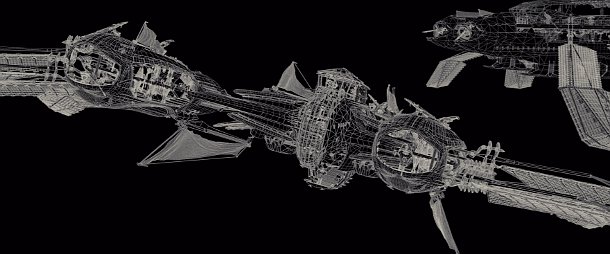

Although its primary responsibility was John Carter’s environments, Cinesite also created the movie’s intricate flying vehicles. The largest have up to 5,000 individually animatable tiles in their wings.

Although its work on the movie overlapped with its work on Battle: Los Angeles and Pirates of the Caribbean: On Stranger Tides, John Carter was by some way Cinesite’s largest project to date.

“It’s the most complex show we’ve worked on,” says Rowe, who, in addition to heading up the Cinesite team, also served as the movie’s overall co-VFX supervisor. “We’ve done films that have 500-800 shots before, but they were quite often in the same environment, so we’d be using the same asset time and again.”

No such luck on John Carter. To bring to the screen the tale of a US Civil War veteran who is transplanted to Mars and finds love among the planet’s warring nations, Cinesite created four key environments: the mile-long walking tanker that is Zodanga; the rival city of Helium, with its central glass palace; the giant airships that are the primary combatants in the war between the two states (which, sadly, space precludes us from discussing in detail here); and the Thern Sanctuary, a huge underground cavern filled with self-organising, semi-organic nanomaterial.

The work encompasses dust simulations, cloth animation, digital doubles, background crowd work in almost every city scene, and the all-digital ‘powers of ten’ shot that opens the movie, as the camera plunges down from outer space. Mere set extension, this is not. And it is this work, along with the visual effects team’s repeated mantra that ‘Close enough is not good enough’, that seems likely to define what is best about John Carter.

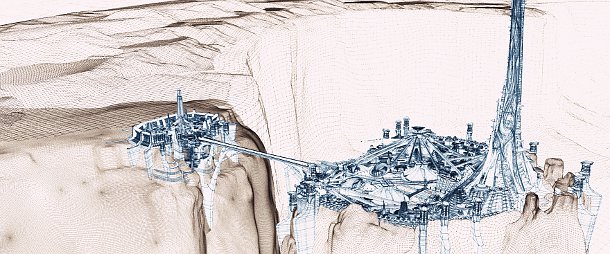

The city of Helium. The challenge faced by Cinesite was not simply the scale of the environments, but the level of detail in each one: a problem that resisted conventional cheats such as matte work or 2D elements on cards.

For Cinesite, the main problem on John Carter was the sheer scale of the environments. “The assets we ended up building were huge, and they have to stand up to multiple different resolutions,” says Rowe. “We tried to be efficient, but the usual cheats – using matte painting, 2D elements on cards, and so on – just didn’t work.”

The scale of the problem can immediately be seen when examining stills of Zodanga, with its detailed buildings, huge structural elements and numerous background props, lanterns and flags. Individual shots of the mechanised city contain up to 20,000 separate elements, sending the total poly count up towards 2 billion.

Managing this huge volume of data was Cinesite’s primary challenge on the movie. The difficulty, says Jon Neill, VFX supervisor on Zodanga – the project was so large that the studio assigned seven separate supervisors to individual sequences – was not ensuring that the shots rendered in a reasonable amount of time: it was ensuring that they rendered at all.

The birth of MeshCache

To cope with the work, Cinesite completely rearchitected its geometry caching system, developing its own proprietary format, MeshCache.

“Because a lot of these props were animated or required a dynamic simulation, we could not simply bake them into render archives,” says Neill. “Unlike the traditional caching systems that require data to be converted between the native and cache formats (that is, between Maya and Alembic), our system supported cache files as a native geometry/animation type in Maya, which allowed us to display, modify and re-cache extremely complex scenes without the overhead of converting data and without duplicating any data on disk or in memory.”
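The article gives no detail of MeshCache’s internals, so the following is only a minimal Python sketch of the idea Neill describes: scene nodes that reference a cache file directly and share one in-memory copy, instead of converting and duplicating the data. `CacheLibrary`, `CacheNode` and `fake_reader` are hypothetical names, and the reader returns placeholder data rather than parsing any real file format.

```python
from dataclasses import dataclass


class CacheLibrary:
    """Loads each cache file at most once and hands out shared references."""

    def __init__(self, reader):
        self._reader = reader   # callable: path -> geometry payload
        self._loaded = {}       # path -> payload shared by every node using it
        self.reads = 0          # how many times the reader was actually called

    def get(self, path):
        if path not in self._loaded:
            self._loaded[path] = self._reader(path)
            self.reads += 1
        return self._loaded[path]


@dataclass
class CacheNode:
    """A scene node whose geometry *is* the cache, not a converted copy."""
    path: str
    library: CacheLibrary

    @property
    def geometry(self):
        # Resolved on demand from the shared library; nothing is duplicated.
        return self.library.get(self.path)


def fake_reader(path):
    # Hypothetical reader: a real one would parse points, faces and
    # animation samples from the file at `path`.
    return {"source": path, "points": [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]}


library = CacheLibrary(fake_reader)
lamps = [CacheNode("props/lamp.cache", library) for _ in range(1000)]

# A thousand nodes, one read, one copy of the data in memory.
assert all(node.geometry is lamps[0].geometry for node in lamps)
print(library.reads)  # 1
```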

MeshCache was designed from the off as a hierarchical system. “You’d start off by building a prop, say a lamp,” says CG supervisor Artemis Oikonomopoulou, who oversaw the work. “Then you’d build a building, and that would be one cache. And then someone would lay that out, and you’d be left with a cache for the whole city. You were left with all these hierarchical, nested caches.”

“As a concept, it sounds really good,” she continues, “but when we started using it, we were faced with more complications, because the shader [has to access data for objects at the bottom of the hierarchical tree]. Each object had a file with all the shading properties, and we built tools to access it even from the very top, so the lighters could have control at any point.”
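To make the nesting concrete, here is a hypothetical Python model of caches that instance other caches, using made-up polygon counts. Walking the tree recursively is what lets a query made at the city level (here, a polygon total) reach the props at the bottom of the hierarchy; it is an illustration of the concept, not Cinesite’s data structure.

```python
from dataclasses import dataclass, field


@dataclass
class Cache:
    """Either leaf geometry, or a container that instances other caches."""
    name: str
    polys: int = 0                                # polygons stored directly
    children: list = field(default_factory=list)  # (child Cache, instance count)

    def add(self, child, count=1):
        self.children.append((child, count))
        return self  # allow chaining while laying out a set

    def total_polys(self):
        # Recurse to the bottom of the tree: own polygons plus all instances.
        return self.polys + sum(child.total_polys() * n for child, n in self.children)

    def depth(self):
        return 1 + max((child.depth() for child, _ in self.children), default=0)


# Invented counts: a lamp prop, a building dressed with 40 lamps,
# and a city laid out from 300 copies of that building.
lamp = Cache("lamp", polys=2_000)
building = Cache("building", polys=50_000).add(lamp, count=40)
city = Cache("city").add(building, count=300)

print(city.total_polys())  # 39000000
print(city.depth())        # 3
```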

“We used custom ‘resource’ files to define the shading properties of all rendering assets – these allowed us to hide the complexity of shader setup from the artists, whilst giving them an option to override any shading parameter on any asset,” explains Neill. “Since all shading properties in resource files were assigned to geometry using metadata filters, we could update models and animation or FX rigs without worrying about breaking lighting rigs.”
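Neill doesn’t describe the resource file format, so this Python sketch assumes a simple one: an ordered list of rules, each pairing a metadata filter (glob patterns via the standard `fnmatch` module) with shading parameters, where later rules and per-shot overrides take precedence. Because rules match on metadata rather than on a specific piece of geometry, a rebuilt model carrying the same tags picks up the same shading. `RESOURCE_RULES` and its keys are invented for illustration.

```python
from fnmatch import fnmatch

# Hypothetical resource rules: (metadata filter, shading parameters).
# Rules are applied in order, so later, more specific rules win.
RESOURCE_RULES = [
    ({"type": "*"}, {"shader": "default", "roughness": 0.5}),
    ({"type": "lamp"}, {"shader": "metal", "roughness": 0.3}),
    ({"type": "lamp", "district": "market"}, {"emission": 4.0}),
]


def matches(metadata, flt):
    """True if every key in the filter glob-matches the asset's metadata."""
    return all(fnmatch(str(metadata.get(key, "")), pattern) for key, pattern in flt.items())


def resolve_shading(metadata, rules=RESOURCE_RULES, overrides=None):
    params = {}
    for flt, values in rules:
        if matches(metadata, flt):
            params.update(values)
    params.update(overrides or {})  # a lighter's per-shot override is applied last
    return params


asset = {"name": "lamp_017", "type": "lamp", "district": "market"}
print(resolve_shading(asset))
# {'shader': 'metal', 'roughness': 0.3, 'emission': 4.0}
```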

On top of this, Cinesite added extra functionality, such as levels of detail. Most buildings in the city use three levels of detail. The studio developed proprietary tools to analyse the visibility and resolution of rendered geometry and select the optimal LoD.
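One common way to choose a level of detail is by an object’s projected size on screen. The Python sketch below assumes a simple pinhole camera, a 40-degree vertical field of view, a 1080-pixel-high frame and invented pixel thresholds for three levels; Cinesite’s own tools also analysed visibility, which here is reduced to a boolean flag.

```python
import math

# Hypothetical thresholds: minimum on-screen height (pixels) for each LoD,
# from highest detail (0) to lowest (2). Real values would be tuned per asset.
LOD_THRESHOLDS = [(0, 400.0), (1, 80.0), (2, 0.0)]


def projected_height_px(object_height, distance, fov_deg, image_height_px):
    """Approximate on-screen height of an object seen by a pinhole camera."""
    # Height of the view frustum at this distance from the camera.
    frustum_height = 2.0 * distance * math.tan(math.radians(fov_deg) / 2.0)
    return object_height / frustum_height * image_height_px


def select_lod(object_height, distance, fov_deg=40.0, image_height_px=1080, visible=True):
    if not visible:
        return None  # culled: no level needs to be loaded at all
    px = projected_height_px(object_height, distance, fov_deg, image_height_px)
    for lod, min_px in LOD_THRESHOLDS:
        if px >= min_px:
            return lod
    return LOD_THRESHOLDS[-1][0]


# A 30 m building seen from 50 m, 500 m and 5 km away.
print([select_lod(30.0, d) for d in (50.0, 500.0, 5000.0)])  # [0, 1, 2]
```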

The project was a huge development effort for the studio: work on the system began in January 2010, but according to Oikonomopoulou, it was not “fully manageable” until six or seven months later.

The complexity of environments such as the city of Zodanga (pictured above) forced Cinesite to develop its own caching format, MeshCache. Caches are hierarchical and supported as a native geometry type in Maya.

The system enabled Cinesite’s modellers to work on props at a resolution suitable to be seen in close-ups, then arrange the props into ‘little sets’. Modelling was done in Maya, with Mudbox used to sculpt detailed displacement maps. Texture work was primarily done in Photoshop, with a little additional ‘dirtying up’ in Mari.

Cinesite also made use of photographic reference material, which should give any London residents studying the surface of Mars a certain frisson of familiarity. “We grabbed textures from buildings all over the South Bank,” reveals Neill. “The Royal Festival Hall’s probably in there somewhere.”

The team worked at texture resolutions of up to 8k, using tiling to keep individual maps to a manageable size. The large support pillars in the city use up to 30 tiles at 2k resolution.
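The article doesn’t say which tiling convention was used; Mari supports the widely used UDIM scheme, so this Python sketch assumes it. It shows the tile-numbering arithmetic and why tiling helps: an 8k-equivalent surface becomes sixteen 2k maps, and a renderer only needs the tiles a shot actually touches. The memory figure assumes uncompressed 8-bit RGBA.

```python
import math


def udim_tile(u, v):
    """UDIM tile number for a UV coordinate: 10 tiles per row, starting at 1001.

    Assumes 0 <= u < 10, as the UDIM convention requires.
    """
    return 1001 + math.floor(u) + 10 * math.floor(v)


def tiles_needed(equivalent_res, tile_res=2048):
    """Tiles required to match one square map of `equivalent_res` pixels."""
    per_side = math.ceil(equivalent_res / tile_res)
    return per_side * per_side


def tile_bytes(tile_res=2048, channels=4, bytes_per_channel=1):
    return tile_res * tile_res * channels * bytes_per_channel


print(udim_tile(0.5, 0.5), udim_tile(3.2, 1.7))  # 1001 1014
print(tiles_needed(8192))                        # 16
print(30 * tile_bytes() / 2**20)                 # 480.0 (MiB for 30 tiles)
```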

As a giant, myriapod-like walking ‘tanker’, the city itself required its own movement animation. Rather than animate Zodanga’s 674 mechanical legs individually by hand, Cinesite used timed animation caches to offset standard motion cycles. Animators could manipulate proxy versions of the legs, each with its own offset curve. The setup would then pick a base movement at random from a library of subtle variants and apply the offset. Secondary movement, such as cogs and cabling, added further variation.
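A minimal Python sketch of the offset-cycle idea, with invented numbers: each of the 674 legs gets a base cycle chosen at random from a small library of subtle variants, plus its own time offset. In the production setup the offsets were animatable curves on proxy legs; here each offset is a constant for brevity, and the sine-based `lift` function is a placeholder for real animation caches.

```python
import math
import random


def lift(phase, amp, skew):
    """Placeholder walk cycle: foot height over one cycle (phase in 0..1)."""
    return amp * max(0.0, math.sin(2.0 * math.pi * (phase + skew)))


def make_cycle_library(n_variants, seed=0):
    """Hypothetical library of subtle variants: (amplitude, skew) per cycle."""
    rng = random.Random(seed)
    return [(1.0 + rng.uniform(-0.1, 0.1), rng.uniform(-0.05, 0.05)) for _ in range(n_variants)]


def assign_legs(n_legs, n_variants, cycle_frames=48, seed=1):
    """Give each leg a randomly chosen base cycle and its own time offset."""
    rng = random.Random(seed)
    return [(rng.randrange(n_variants), rng.uniform(0.0, cycle_frames)) for _ in range(n_legs)]


def leg_height(frame, leg, library, cycle_frames=48):
    variant, offset = leg
    amp, skew = library[variant]
    # Shift the shared cycle by this leg's offset, wrapping around the cycle.
    phase = ((frame + offset) % cycle_frames) / cycle_frames
    return lift(phase, amp, skew)


library = make_cycle_library(8)
legs = assign_legs(674, len(library))
heights = [leg_height(100, leg, library) for leg in legs]
```

Because the library and offsets are seeded, the same crowd of legs is reproduced on every re-render, which matters when shots are rendered in batches.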

The prominent puffs of dust as the claw at the foot of each leg strikes the ground were also largely simulated in 3D. Using Maya Fluids, Cinesite built up a library of standard simulations from which lighters could choose at random, turning round individual elements where necessary to avoid visible repetition. The larger trail behind the city itself, christened a ‘Buffalo Trail’ by the studio – “You know, when you have a herd of buffalo [sweeping across the savannah], and it leaves a trail of dust behind it.” – was simulated in Houdini. Maya’s native nCloth system supplied the numerous flags above the city streets, while background crowds – often noticed only subliminally on first viewing, but always present – were generated in Massive.
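Picking elements at random from a library still risks the same simulation appearing twice in a row. This hypothetical Python helper draws a sim for each foot impact, never reusing the one before it, and assigns a random rotation about the vertical axis, standing in for the lighters’ manual ‘turning round’ of individual elements. The library size and impact count are arbitrary.

```python
import random


def pick_dust_elements(n_impacts, library_size, seed=0):
    """Assign a library dust sim and a rotation to each foot impact.

    Consecutive impacts never share a sim, and each element gets a random
    rotation (degrees about the up axis) so repeats are harder to spot.
    """
    if library_size < 2:
        raise ValueError("need at least two sims to avoid back-to-back repeats")
    rng = random.Random(seed)
    picks = []
    previous = None
    for _ in range(n_impacts):
        sim = rng.choice([i for i in range(library_size) if i != previous])
        rotation = rng.uniform(0.0, 360.0)
        picks.append((sim, rotation))
        previous = sim
    return picks


impacts = pick_dust_elements(n_impacts=12, library_size=5)
```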

Paradoxically, although the cumulative complexity of such scenes formed Cinesite’s biggest challenge at the start of production, by the end, it was actually working in the studio’s favour.

“If we had gone for an easier pipeline, it would have been more difficult for us to sell the [results to Disney],” says Neill. “Even though it took more time to render, we ended up getting the shots out of the door quicker.”

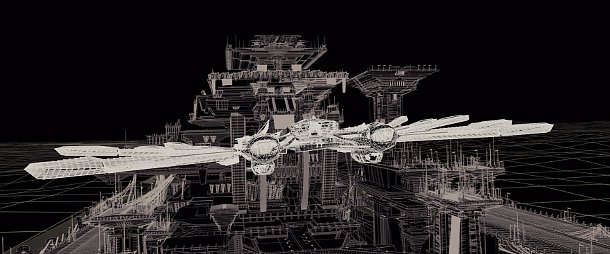

The all-glass Palace of Light: the jewel of the city of Helium, but an enormous render challenge, calling for rigorous use of caching to make calculating reflections and refractions manageable in the time available.

Render times, already high, were pushed up further by the degree of photorealism demanded by the production. “It’s hard to believe, but it’s all raytraced,” says Neill. Although Cinesite tried standard cheats such as rendering scenes in sections and arranging the output on cards during the composite, ultimately its sole concession was to prebake the GI.

With an 800-frame shot taking anywhere up to a week to render on the hundred or so processors typically available on Cinesite’s farm, Neill’s team was forced to output batches of 40 frames at a time so the overall look could be signed off before completing a full render.
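Rough arithmetic on those figures shows what each frame cost and why 40-frame batches made sense for sign-off. It assumes the whole 100-processor allocation works on a single 800-frame shot for a full week and scales perfectly; `frame_batches` is a hypothetical helper for splitting a shot into ranges.

```python
import math

# Figures from the text; assumes one shot has the whole allocation for a
# full week and that rendering parallelises perfectly across processors.
frames = 800
processors = 100
wall_clock_hours = 7 * 24
batch_size = 40

cpu_hours_per_frame = processors * wall_clock_hours / frames
batches = math.ceil(frames / batch_size)
hours_per_batch = wall_clock_hours / batches


def frame_batches(first, last, size):
    """Hypothetical helper: split an inclusive frame range into batches."""
    return [(start, min(start + size - 1, last)) for start in range(first, last + 1, size)]


print(cpu_hours_per_frame)            # 21.0
print(batches, hours_per_batch)       # 20 8.4
print(frame_batches(1, 800, 40)[:2])  # [(1, 40), (41, 80)]
```

On these assumptions, each batch comes back in well under half a day, so the look can be reviewed several times a week instead of once.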

“Even for major shots, we’d render perhaps six times in total,” he says. “On previous shows, we’d just render, render, render, maybe hundreds of times.”

A glass half full?

The problem was even more acute for the 197 shots set in Helium that feature the city’s centrepiece: the Palace of Light. Described by Andrew Stanton as the ‘jewel of the city’, the palace is a cathedral-like space with solid vertical ribs supporting the ‘feathers’ (vast glass wall panels) and topped off with mirrors and a central lens. To complicate matters, particular attention had to be paid to matching the frosty look of the glass used on set during the live shoot.

“The shader team tried so many things, but we were at the point where it was taking 40 hours to render a single frame,” says Artemis Oikonomopoulou.

Again, the solution here was to cache data. Cinesite’s setup was optimised to precache the objects visible to the camera, then call on the cache for reflections and refractions. And for once, a certain amount of conventional trickery was used, with far-off panels being rendered out as textures. The twin-pronged attack worked, cutting render times to around two hours per frame.
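A heavily simplified Python sketch of the two tactics: shade the camera-visible objects once, let reflection and refraction lookups reuse those results, and swap panels beyond a distance threshold for pre-rendered textures. Real glass shading is view-dependent, so a production cache stores something richer than one value per object; `expensive_glass_shader`, the 500-unit cutoff and the random ray hits are all placeholders.

```python
import random


class ShadingCache:
    """Shade each object once, then reuse the result for secondary rays."""

    def __init__(self, shade_fn):
        self._shade_fn = shade_fn
        self._results = {}
        self.evaluations = 0  # how many times the expensive shader really ran

    def precache(self, object_ids):
        # Pass 1: shade everything the camera can see.
        for oid in object_ids:
            self.lookup(oid)

    def lookup(self, oid):
        # Reflection/refraction rays land here instead of re-running the shader.
        if oid not in self._results:
            self._results[oid] = self._shade_fn(oid)
            self.evaluations += 1
        return self._results[oid]


def expensive_glass_shader(oid):
    return 0.1 * (oid % 7)  # hypothetical stand-in for a costly frosted-glass shade


def shade_panel(oid, distance, cache, far_distance=500.0, baked_textures=None):
    # Far-off panels use a pre-rendered texture rather than live raytracing.
    if distance > far_distance and baked_textures is not None:
        return baked_textures[oid]
    return cache.lookup(oid)


rng = random.Random(0)
visible = list(range(200))
cache = ShadingCache(expensive_glass_shader)
cache.precache(visible)
for oid in (rng.choice(visible) for _ in range(10_000)):
    cache.lookup(oid)
print(cache.evaluations)  # 200, versus 10,200 shader calls without the cache
```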

“Rendering glass is not rocket science. It’s done well and often in CG,” says Sue Rowe. “But the challenge we had was to do it as a company of our size, with the deadlines we had. I’m very pleased with the work we did to make things as efficient as possible.”

The Thern Sanctuary, showing the underlying low-level geometry (top) and a final shot (below). The Thern itself, a self-organising nanomaterial, is all procedural geometry, generated using Dijkstra’s 50-year-old shortest-path algorithm.

Another major challenge was the 82-shot sequence that takes place in the Thern Sanctuary, a vast hemispherical chamber whose walls and floor are composed of the Thern itself: a moving, changing nanomaterial midway between an organic structure and a self-organising machine.

“It was an enormous render challenge,” says Rowe. “The rooms are huge – 90 metres wide, 90 metres high – and it [the Thern] had to go off into infinity. That’s what the director wanted it to look like, at any rate. If we’d actually rendered it to infinity, we’d still be going now.”

Look development for the sequence proved equally demanding. “It’s just a paragraph in the script: ‘The camera pulls back and the scene is alive with electricity’,” says VFX supervisor Simon Stanley-Clamp, who was brought in to finish up the sequence midway through production.

The key visual reference was not an obvious organic one such as coral or neural networks, but fibre-optic cabling. Through procedural shading, augmented in Nuke, Cinesite was able to create pulses and flashes of light deep within the Thern, with an orange internal glow mimicking trapped ambient light.

Dijkstra’s algorithm

The Thern itself is composed of successive layers of Houdini procedural geometry: an underlying scaffold layer to give the basic form, then progressively finer detail. To generate the branching forms, Cinesite tried standard solutions such as L-systems or Voronoi noise, but found that the results were either too regular or too difficult to animate in shots in which the growth of the Thern had to be directed by hand. Ultimately, the solution to this futuristic challenge lay in research over 50 years old: Dijkstra’s algorithm, published in 1959 and now widely used in network routing, produced the perfect growth forms for the nanomaterial.
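Cinesite’s Houdini setup isn’t described, but a common way to get branching growth from Dijkstra’s algorithm is to scatter points, connect near neighbours, run shortest paths from a seed and keep each point’s link to its predecessor. The resulting shortest-path tree branches naturally, and revealing it up to a growing distance animates the growth outwards from the seed, which can be directed by moving seeds or editing edge weights. This is a minimal Python sketch of that general technique, not the studio’s implementation; the point count and `k` are arbitrary.

```python
import heapq
import math
import random


def random_points(n, seed=0):
    rng = random.Random(seed)
    return [(rng.random(), rng.random()) for _ in range(n)]


def knn_graph(points, k=6):
    """Connect each point to its k nearest neighbours (brute force, for clarity)."""
    graph = {i: [] for i in range(len(points))}
    for i, p in enumerate(points):
        nearest = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        for d, j in nearest[:k]:
            graph[i].append((j, d))
            graph[j].append((i, d))  # keep the graph undirected
    return graph


def dijkstra(graph, source):
    """Shortest distances from `source` plus each node's predecessor."""
    dist = {source: 0.0}
    parent = {source: None}
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue  # stale heap entry
        for v, w in graph[u]:
            nd = d + w
            if nd < dist.get(v, math.inf):
                dist[v] = nd
                parent[v] = u
                heapq.heappush(heap, (nd, v))
    return dist, parent


def grown_branches(dist, parent, grow_radius):
    """Shortest-path-tree edges reached so far; animate grow_radius to 'grow'."""
    return [(parent[v], v) for v, d in dist.items() if parent[v] is not None and d <= grow_radius]


points = random_points(300)
graph = knn_graph(points)
dist, parent = dijkstra(graph, source=0)
early = grown_branches(dist, parent, grow_radius=0.2)
late = grown_branches(dist, parent, grow_radius=0.6)
```

Each edge joins a point to its predecessor on the shortest path back to the seed, so every partial growth stage is itself a connected, branching tree.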

Oikonomopoulou describes the system as effectively a series of hair curves that are replaced with cylindrical geometry at render time, with normal blending used to generate fine strands. For once, render times were not the major problem: while individual shots might take only hours to render, it could take two or three days of caching to get to that point, generating literally terabytes of data for a single iteration of an animation.
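Turning curves into cylinders at render time is a standard sweep: place a ring of vertices around each curve point, perpendicular to the curve, and stitch neighbouring rings together with quads. The Python sketch below shows that step only. Its choice of reference vector for each ring is a simple assumption that can twist on sharply bending curves (production tools typically use parallel-transport frames), and the normal blending Oikonomopoulou mentions is not modelled.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def _scale(a, s):
    return tuple(x * s for x in a)


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def _normalize(a):
    return _scale(a, 1.0 / math.sqrt(sum(x * x for x in a)))


def curve_to_tube(points, radius, sides=6):
    """Replace a polyline 'hair' curve with cylinder geometry.

    Assumes consecutive points are distinct. Returns (vertices, quads): a ring
    of `sides` vertices per curve point, with quads joining consecutive rings.
    """
    vertices, quads = [], []
    for i, p in enumerate(points):
        # Tangent from neighbouring points (one-sided at the curve ends).
        a = points[max(i - 1, 0)]
        b = points[min(i + 1, len(points) - 1)]
        t = _normalize(_sub(b, a))
        # Any vector not parallel to the tangent yields a perpendicular frame.
        ref = (0.0, 0.0, 1.0) if abs(t[2]) < 0.9 else (1.0, 0.0, 0.0)
        n = _normalize(_cross(t, ref))
        bn = _cross(t, n)  # already unit length: t and n are orthonormal
        for s in range(sides):
            ang = 2.0 * math.pi * s / sides
            offset = _add(_scale(n, math.cos(ang) * radius), _scale(bn, math.sin(ang) * radius))
            vertices.append(_add(p, offset))
    for i in range(len(points) - 1):
        r0, r1 = i * sides, (i + 1) * sides
        for s in range(sides):
            s2 = (s + 1) % sides
            quads.append((r0 + s, r0 + s2, r1 + s2, r1 + s))
    return vertices, quads


verts, quads = curve_to_tube([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)], radius=0.1)
```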

“It was really frustrating sometimes,” says Oikonomopoulou. “You’d have your animation approved [on Monday], and it would take until the end of the week until you had it ready in lighting to get to a comp – and then the animation would change and you’d be back a week.”

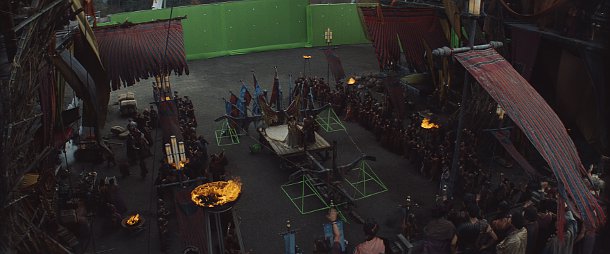

From greenscreen shoot (above) to final digital set (below). Spanning not only buildings but cloth and crowd simulations, Cinesite’s environment work creates a vision of Mars as a rich, dynamic, constantly evolving world.

Distance yourself from the struggles of the artists – and from the media coverage – and John Carter is very much a success: visually, if not financially. Cinesite’s environments evoke a rich, dynamic world whose full complexity emerges only over repeated viewings, and one can only hope the movie gains a second life on DVD.

Although critics have suggested that Stanton has stumbled in his transition from animation to live action, Sue Rowe is full of praise for the director’s approach to visual effects.

“Andrew brought a kind of decisive authority to the project,” she says. “Obviously, he’s a very good animator, and he knows his CG science, so you can have a very frank conversation with him [about what is technically feasible]. He’s also very visually articulate. [With a less tech-savvy director], it would have been far more painful, and there would have been far more costly delays.”

Having worked on John Carter since 2009, Rowe also credits Stanton for that rarest of things in modern visual effects production: a schedule measured in years rather than months.

“Deadlines are so tight now on VFX movies that you’ll often meet a producer who’ll boast that on [movie X], they didn’t even use the greenscreens, or that they posted it in six weeks, and you really want to say: ‘Yeah, but it looked crap.’ But the great thing about Andrew is that he knows how long these things really take.”

Which only makes John Carter’s predicted $200 million box-office shortfall more disappointing. Productions with this kind of approach to effects are few and far between, and it is easy to imagine Hollywood concluding that focusing on visual quality over speed is an unnecessary financial risk.

As Rowe puts it: “When Andrew was briefing us at the start, he said, ‘I’m not doing a science-fiction movie. I’m doing an epic tale of love, of betrayal, of loss.’” It would be a shame if John Carter’s legacy in the industry were only the last of the three.


All images: ©2011 Disney. JOHN CARTER™ ERB, Inc.